Why I Don’t Use Social Media is a three-part series exploring my relationship with online connection, how social networking slowly became social media, and why I eventually stepped away. This isn’t a rage-quit or a moral sermon. It’s simply an honest account of what changed, what was lost, and what I gained in the quiet.

In Part II, I looked back at a time when online social spaces felt like rooms. Chat rooms, forums, and even MySpace had their own cultures, their own pace, and their own sense of community. Even when things weren’t perfect, it still felt like people were meeting each other on relatively human terms.

But somewhere along the timeline, the entire purpose of being online began to shift.

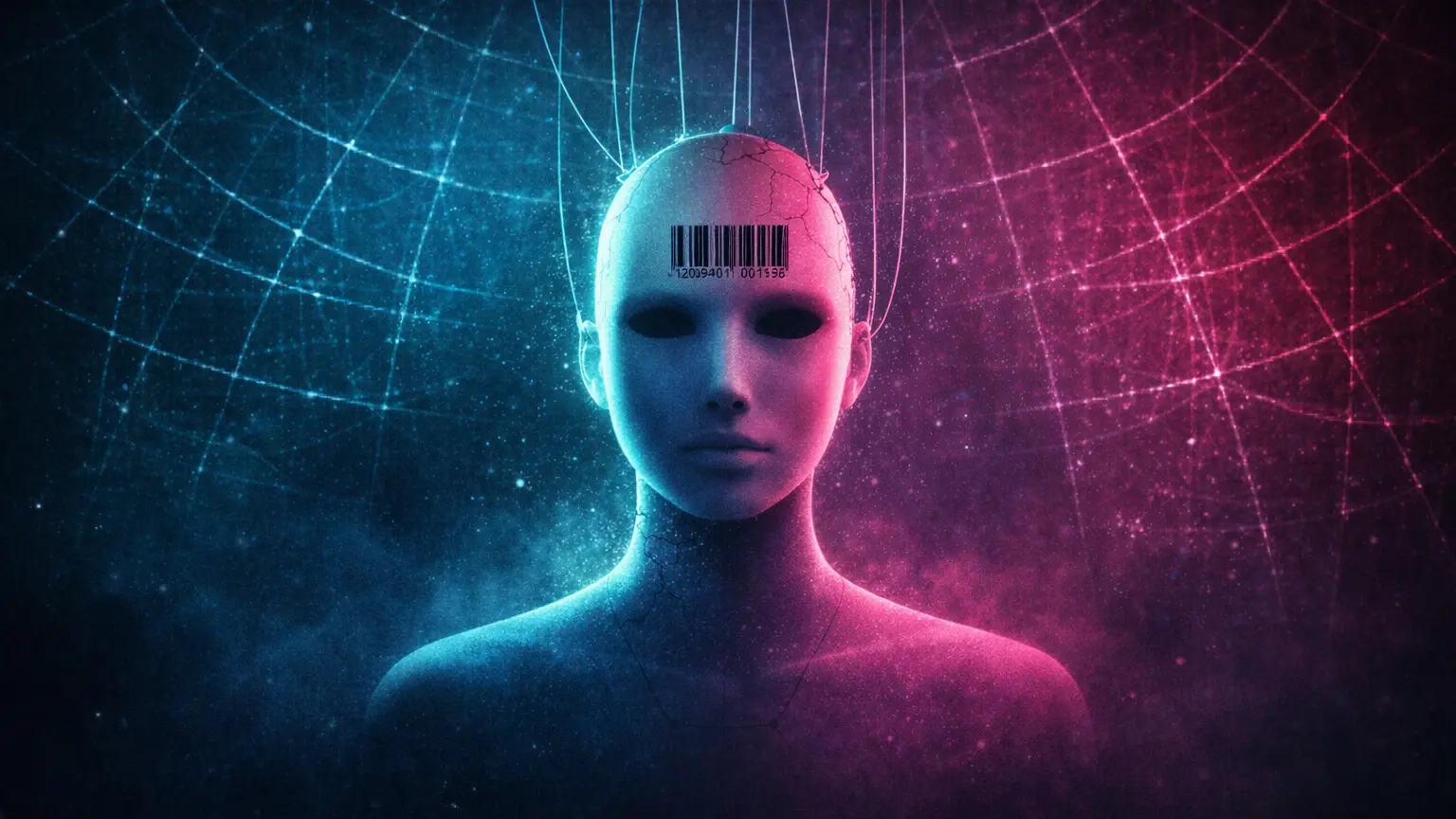

Connection started turning into visibility. Expression started turning into optimisation. People stopped showing up to talk, and started showing up to be seen. It’s hard to pinpoint exactly when this became the default, partly because it happened gradually, and partly because it was sold to us as progress.

But the end result is obvious.

“Social networking” didn’t simply evolve into “social media”. It was quietly redesigned into it. The language changed, the priorities changed, and most people barely noticed because it didn’t arrive as an announcement. It arrived as a new normal, shaped by platforms whose incentives were never neutral.

Memes and the Compression of Thought

Not long after Facebook became globally dominant, memes started becoming a major part of online culture. Memes, at least in the modern sense, are basically humour condensed into a single image, often paired with a caption. They spread quickly, they evolve through repetition, and they offer easy social hooks for people to build on.

Some memes are genuinely funny. Some are clever. Some are even oddly insightful in a compressed, sideways sort of way. But early on, I found myself uneasy with the format.

It wasn’t that I didn’t understand the humour. It wasn’t even that I disliked the jokes themselves. I just didn’t like what the format was doing to communication. It felt like conversation being flattened into a template, and personality being squeezed through pre-approved shapes.

I remember posting on Facebook when memes were first becoming common, basically asking if it was just me, or if they were making the world stupider. I wasn’t trying to be edgy, and I wasn’t trying to provoke anyone. I was reacting to something I found culturally strange and trying to put it into words.

I got shouted down instantly. My opinion was wildly unpopular.

Looking back, what stands out isn’t even the disagreement. It’s the speed and certainty of the backlash. It felt like a warning shot, as if certain kinds of critical thought were becoming socially unwelcome, especially if they threatened the fun, the vibe, or the collective agreement that keeps a platform frictionless.

Memes were not the worst thing to happen to the internet, but they were a sign of the direction things were moving in. Communication was being compressed, simplified, and optimised for rapid sharing rather than meaningful exchange.

When “Social Networking” Quietly Became “Social Media”

For a while, the shift was subtle. Different platforms emerged with different vibes, and people migrated depending on what suited them. Twitter was a big one, and to me it always looked like a fast-moving update stream, with a heavy focus on public figures and bite-sized commentary. I never signed up for it, not because I thought I was above it, but because I could already feel what kind of mental environment it would create for me.

Then came Instagram, and that was the point where the whole thing started to feel truly alien.

Instagram is social media built around images. On paper, I understand the appeal. People like visuals. People like documenting moments. People like sharing aesthetics. The problem isn’t the existence of photos, it’s what the platform encourages people to do with them.

The Instagram era felt like a cultural intensifier. It encouraged self-commodification, validation traps, and constant identity management centred around appearance. It rewarded the performance of a life, rather than the living of one. And it wasn’t just “look at this cool thing I did”. It increasingly became “look at me being the type of person who does cool things”.

I’ve seen people go to events purely to produce proof-of-attendance. Not to experience the event, but to capture the event. Not to have a night, but to extract a post.

And this is where the rebranding matters. “Social networking” didn’t shift into “social media” because people collectively decided they wanted something shallower. It became “social media” because platforms restructured online interaction into something that could be consumed, measured, targeted, and monetised. The design rewarded broadcasting over conversation, and performance over connection, and the culture followed the incentives.

Once that happened, the internet began to feel less like a network of people and more like a marketplace of identities.

The Artist Becomes a Product

As social media tightened its grip on everyday social life, it also tightened its grip on creative life. Somewhere along the way, pressure began to build on artists and musicians to promote themselves through social platforms, as if it were simply part of the job now.

If you wanted people to hear your music, you were expected to be present. To post constantly. To engage. To feed the algorithm. To build a “brand”. To become discoverable by playing the game.

I never successfully adopted that mindset, for a number of reasons. Partly because the workload felt absurd, especially for someone with ADHD and autism. The extra resources required to manage a constant online presence felt like an investment in something I was not good at, not interested in, and not capable of sustaining without burning out.

But more than that, it felt wrong.

I’m not comfortable pushing myself as a product. Even when I tried to treat it as “just marketing”, something in me resisted. It felt like I was being asked to flatten myself into something palatable and promotable, and then repeatedly present that version to the world until it started generating numbers.

At one point, my girlfriend even offered to manage my social media accounts for my music projects on my behalf, which was incredibly kind. But even then, it didn’t really work, because the problem wasn’t just the time commitment. The problem was what the whole process demanded of me.

It didn’t just want me to be an artist.

It wanted me to be a personality.

It wanted charisma as an output. It wanted a steady flow of “content” that was only partly related to the music itself. It wanted my life, my face, my social identity, my accessibility, my likeability, my relatability, all packaged into an ongoing performance alongside the creative work.

And if that is the price of visibility, then visibility starts to look less like opportunity and more like coercion.

I explored this more directly in two other articles, “The Independent Artist in the Age of Self Commodification” and “Art is NOT ‘Content’!”, because I don’t think this pressure is only damaging to artists. I think it is damaging to art itself. It changes what people make, how they present it, and what they feel allowed to be.

Going Viral as the New Goal

Somewhere along this timeline, and I struggle to pinpoint exactly when, “going viral” stopped being a rare accident and became a goal. Not just a possible path to attention, but the path that everyone was aiming for.

And when virality becomes the goal, content changes. Depth becomes a liability. Context becomes a burden. Nuance becomes inefficient. Intelligence becomes optional. The system rewards what spreads, not what’s true, not what’s meaningful, and not what’s human.

That’s why the rise of short-form video content, platforms like TikTok and everything that followed in its wake, felt like the endpoint of the process. I haven’t even bothered to explore it properly myself because I can already see what it represents from the outside. Communication reduced to bite-sized bursts designed to hijack attention, recycle trends, and keep people scrolling.

It all feels so shallow that it becomes hard to even call it “social” anymore.

To me, it looks like a world where people behave like idiots to entertain more idiots, because the reward structure is built around immediate reaction and maximum spread. And if that becomes the default route to success, it becomes obvious why culture itself starts to degrade. I’ve explored that wider attention economy more directly in “Are You Paying Attention?”, and the way platforms subtly train people out of nuance in “Trained Not to Think: The Slow Death of Nuance”.

At some point, the question stops being “Why don’t you use social media?”

And becomes “Why would you?”

Because once expression becomes exploitation, the healthiest move isn’t to optimise yourself for the system.

It’s to refuse the system entirely.