On the bus home, I overheard a parent talking to her children. I did not quite catch the piece of information she had given them, but the kids questioned it, as kids often do. Her reply made me pause:

“It’s true, ChatGPT says so!”

That simple sentence carries more weight than it might appear. It was not said as a joke. It was said with the tone of final authority. Not “I read it somewhere,” not “I think that is the case,” but “ChatGPT says so,” therefore unquestionable.

The problem with treating AI as truth

I use ChatGPT casually and often. I find it useful, stimulating, even creative. But it is not infallible. I have seen it give confident answers that were simply inaccurate. Sometimes the error is small, sometimes it is glaring. That is because, at its core, ChatGPT is not a library or a fact checker. It is a probabilistic language model: it generates the words most likely to come next, based on patterns in its training text. It sounds authoritative, but sounding right is not the same thing as being right.
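
For readers who like to see the idea rather than take my word for it, here is a toy sketch in Python of what "probabilistic" means. It is not how ChatGPT is actually implemented. The prompt, the three candidate words, and their probabilities are all invented for illustration, and `sample_next_word` is a hypothetical helper name. The only point is that a system which picks words by likelihood will sometimes pick a wrong one, and nothing in the picking step knows the difference.

```python
import random

# Invented probabilities for the word that follows
# "The capital of Australia is". A real model learns such numbers
# from its training text; these values are assumptions for the demo.
next_word_probs = {
    "Canberra": 0.55,   # correct
    "Sydney": 0.35,     # wrong, but common in casual writing
    "Melbourne": 0.10,  # wrong
}

def sample_next_word(probs, rng):
    """Pick one word at random, weighted by its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so the run is repeatable
answers = [sample_next_word(next_word_probs, rng) for _ in range(1000)]
wrong = sum(1 for a in answers if a != "Canberra")
print(f"Wrong answers in 1000 tries: {wrong}")
```

With these made-up numbers, a large share of the thousand answers come out wrong, and each of them would be delivered in exactly the same confident tone as the right ones.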

Most of the errors are not malicious. They come from the quirks of how AI is built: training data full of human errors, the tendency to fill in gaps with plausible-sounding fiction, the limits of knowledge cutoff dates. In the end, a wrong answer is still a wrong answer.

The deeper worry

The everyday mistakes are one thing. The bigger concern is what happens when society decides to place absolute trust in this technology. What happens when “ChatGPT says so” becomes the modern equivalent of “the newspaper says so,” or “the priest says so”?

Who controls the voice of AI? Already, the way models are tuned and filtered reflects the biases and priorities of those who own them. Today, that mostly means corporations trying to avoid lawsuits or public backlash. Tomorrow, it could mean governments steering the flow of truth itself.

A quiet dystopia in the making

It is not hard to imagine where this road leads:

- Manipulation by design: If AI becomes our main gateway to knowledge, its answers could be quietly weighted towards selling us certain products, services, or lifestyles. Imagine if every “neutral” recommendation subtly nudged us toward a sponsor’s brand.

- Steering public opinion: If authorities lean on AI providers to promote certain narratives, inconvenient truths could simply disappear. Instead of burning books, it may take only a few lines of code.

- Illusion of neutrality: Because AI sounds impartial, many will not notice the framing. “The algorithm says so” could become more persuasive than “the news says so.”

- Feedback loops of control: As people rely more on AI, its outputs shape popular thinking. Then the next model is trained partly on that shaped thinking, reinforcing the bias.

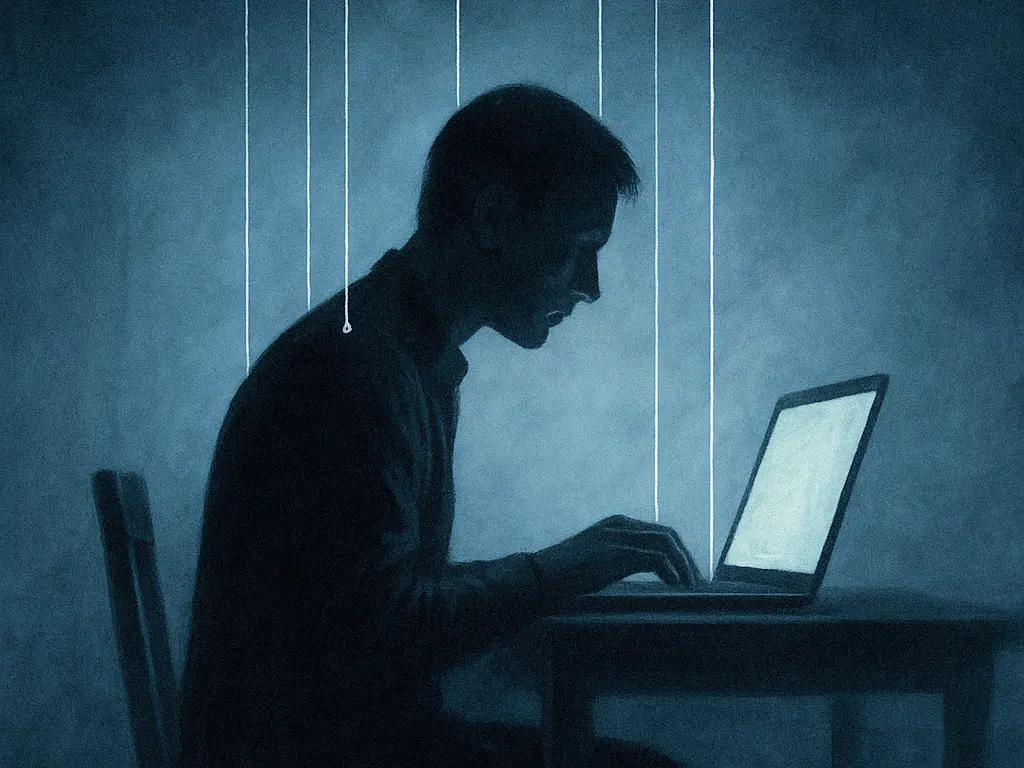

This would not look like a science fiction dictatorship with jackboots in the streets. It would feel comfortable, easy, polite. A velvet cage where questions stop being asked because the answers are always ready to hand.

What we need instead

AI can be a tool. It can be helpful, creative, and even liberating. But it must never be treated as an unquestionable authority. To prevent that slide, we need:

- Decentralisation: open-source models that anyone can run and check.

- Transparency: clarity about how these systems are trained and filtered.

- Critical thinking: a culture where people are encouraged to question AI, not bow to it.

- Diversity of sources: books, journalism, lived experience, and human reasoning must remain part of the conversation.

AI is here to stay, and it will almost certainly become a central part of how we live and learn. But whether it becomes a tool of empowerment or a velvet cage of manipulation depends not only on the companies that build it, but on us: on how much we insist on questioning, cross-checking, and keeping the human spirit of doubt alive.