I grew up in the 1980s, when television advertising still had a kind of charm. I remember the jingles, the mascots, the catchy slogans that managed to lodge themselves in your head for weeks. Even as a child, I knew they were trying to sell me something, but at least they did it with some flair. They felt like part of the entertainment itself.

Something has changed since then. Advertising is no longer something that interrupts culture; it has become the culture. Every space, every platform, and every idle moment now feels colonised by a hidden intention to sell. To understand how we arrived here, it is worth tracing how advertising has evolved from a loud street-side performance to an invisible system of persuasion that shapes our sense of self.

The Loud Salesmen

The earliest form of advertising was brutally direct. Ancient merchants shouted in markets, painted signs on walls, or hung banners above their stalls. When mass-circulation newspapers emerged in the 1800s, advertising became more widespread but no less blunt. Their pages were filled with promises of miracle tonics, soap that made you beautiful, and pills that cured everything from toothache to heartbreak. These ads were crude, manipulative, and often fraudulent, but at least you knew what you were looking at. Someone was selling, and you were free to walk away.

The Mad Men Era

The 20th century transformed advertising into an art form. With the rise of radio and television, storytelling became the new language of persuasion. Campaigns no longer sold only a product; they sold an identity, a dream, a way of life. The Coca-Cola Santa Claus, the Marlboro Man, and the perfect suburban family all came from the same creative laboratories.

This was the era of the “ad man,” immortalised in cultural artefacts like Bewitched or later Mad Men. Advertising was portrayed as a glamorous profession. These were the people who didn’t just reflect society; they helped build it. The line between commerce and culture began to blur.

The 80s and 90s: Ads as Entertainment

By the 1980s and 1990s, advertising had taken on a theatrical quality. It was playful, colourful, and memorable. Mascots like Tony the Tiger, slogans like “Just Do It,” and tunes you could hum all day made adverts feel like short pieces of performance art. They were still manipulative, of course, but they wore their intentions openly.

Looking back, perhaps this is why many people from my generation recall old ads with a strange fondness. They were transparent. They worked hard to win your attention rather than simply steal it.

The Weird and Annoying Years

Somewhere in the late 1990s and early 2000s, advertising lost its balance. It became surreal, loud, and deliberately irritating. Think of Crazy Frog, the Budweiser frogs, or the unnerving Burger King mascot. Annoyance became a marketing tool. If something got stuck in your head, even out of frustration, the job was done.

This was the period when “going viral” became a goal, before social media even existed. The absurdity was the message.

The Internet Disruption

When the internet arrived, advertising was clumsy but eager. Early banner ads were brightly coloured, flashing boxes that you could easily ignore. But the industry adapted quickly. As data collection improved, advertising became personal. It stopped shouting to the crowd and began whispering to the individual.

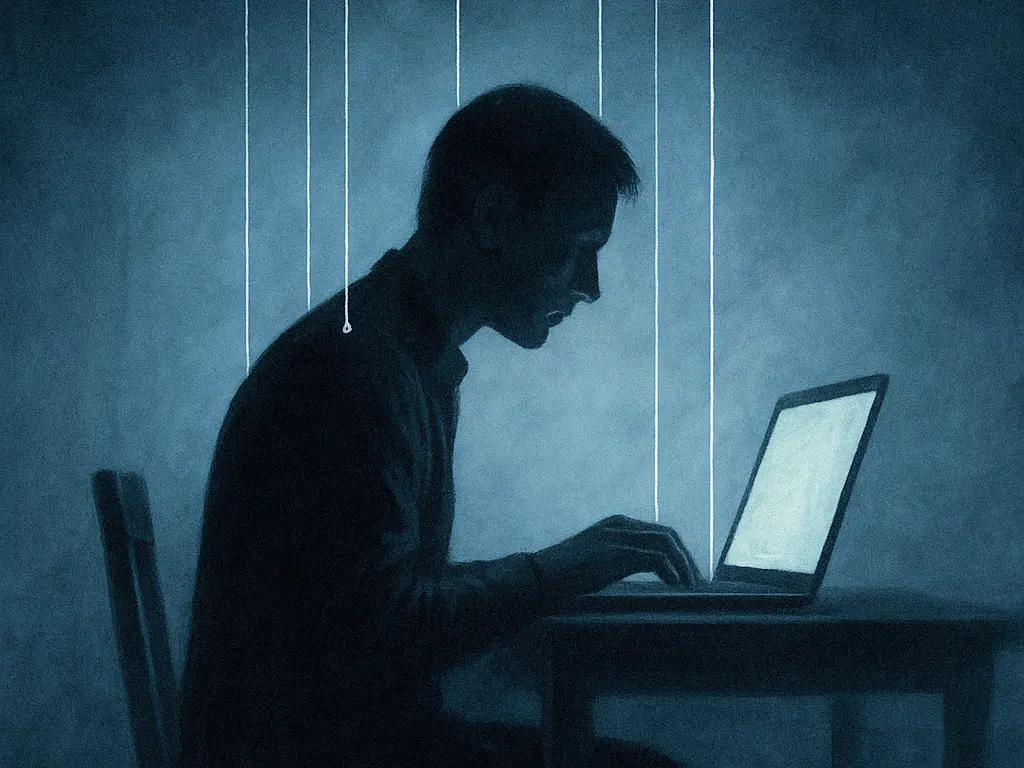

This marked the rise of surveillance capitalism. Every click, search, and pause became a data point. You were no longer a passive audience member; you were a psychological profile to be targeted. The salesman had followed you home and was now reading your mind.

The Age of Disguise

By the 2010s, advertising learned to hide in plain sight. Sponsored posts, influencer endorsements, and “native” content made it difficult to tell where information ended and manipulation began. Search engines, news sites, and social platforms quietly filled with ads disguised as genuine results.

South Park once parodied this perfectly with its storyline about intelligent ads (Season 19). It was satire, but it was also prophecy. Today, even image searches are littered with sponsored results. The ad no longer wants to be seen; it wants to be believed.

Culture as Commerce

This is the stage we now find ourselves in. Advertising has stopped orbiting culture and instead absorbed it completely. Everything is for sale, including identity itself.

People no longer ask “What do I like?” but “What do I subscribe to?” We define ourselves through brands and platforms: Apple or Android, Nike or Adidas, Netflix or Disney+. Even rebellion is commercialised. You can buy “authenticity,” but only if you can afford the price tag.

Advertising has achieved what no political ideology ever could. It has replaced meaning with marketing and turned culture into a series of brand alignments.

Conclusion: From Persuasion to Colonisation

Advertising began as a voice shouting in the marketplace. It evolved into storytelling, then spectacle, then infiltration. Today it is everywhere and nowhere, woven into the fabric of our reality.

The change that occurred over the last century is more than technological. It is philosophical. Advertising no longer sells only products; it sells identities. It shapes our desires before we even know we have them.

Perhaps that is why so many of us feel weary. We are not just tired of being sold to; we are tired of living inside the sale itself.